The question in the book world today is how far A.I. has already entered the system without editorial teams catching it in time. The controversy around Shy Girl threw that question wide open. Allegations grew that the novel had been written to a significant degree with A.I. assistance, and the case ended with a decision to halt its U.S. release and discontinue its British edition.

The novel began as a self-published title, then found an audience among horror readers before making its way into commercial publishing. That path alone reveals a growing fragility in the system: books are born outside the traditional structure, then enter it later on the strength of sales and attention, while screening and verification tools remain slower than that flow.

The issue is not just one novel, but what this case represents. That the book passed through the editorial process and actually reached the market shows that the publishing industry still lacks a stable and publicly defined mechanism for dealing with texts that may be written wholly or partly by A.I.

Detail

The suspicion did not come out of nowhere. Many readers pointed to strained metaphors, repetitive phrasing, emotional excess, and logical gaps within the text. Multiple technical reviews then deepened the doubts by identifying linguistic patterns that recur in writing produced by large language models. When such indicators come from more than one source, readers and technical reviews alike, they are enough to unsettle both the publisher and the market.

More importantly, the author denied using A.I. to write the novel itself, and said that a person she hired to edit the self-published version was the one who used it. Here, the publishing industry’s new dilemma becomes clear: even when the writer does not admit direct use, the boundaries of intervention remain blurry. Does A.I.-based editing count as part of the writing? And does a text remain original if it is later reshaped by a machine?

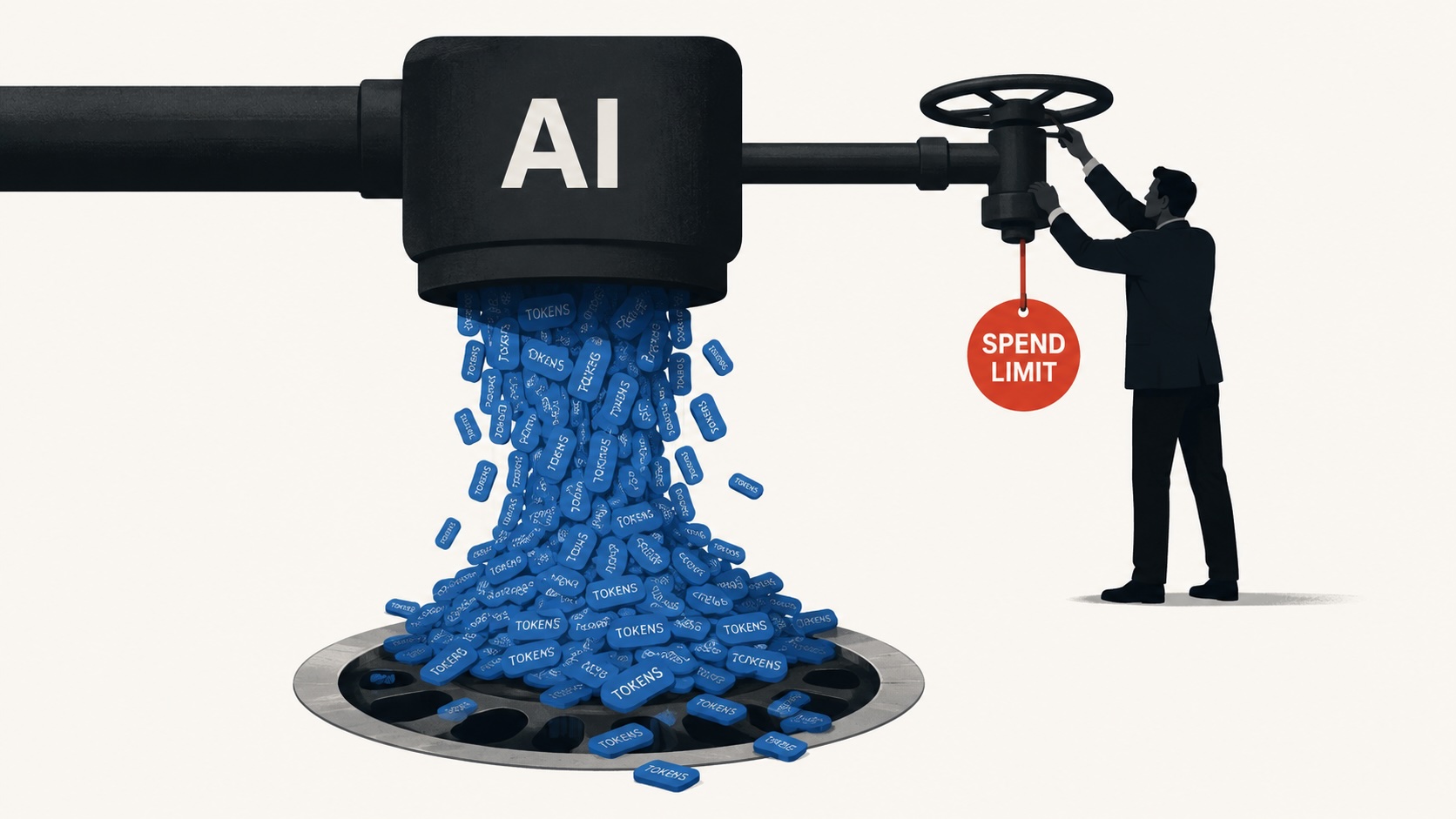

That ambiguity cuts to the heart of the industry. Most contracts do not impose a detailed and explicit ban on A.I. use. Instead, they rely on a broad clause about the originality of the work. But that clause is no longer enough on its own in an era when a writer can ask a machine to suggest plot twists, rewrite chapters, or polish drafts, then present the outcome as original work.

At the same time, many publishers do not want to shut the door completely. Some uses are now seen as practical within the industry, from marketing and translation to support tools in research and preparation. That is why the stance remains hesitant: neither full acceptance nor total prohibition, but a gray zone that grows wider by the day.

The numbers add an even more unsettling layer. Self-publishing is expanding rapidly, the number of books is rising fast, and there are signs of a clear increase in the share of novels containing large amounts of machine-generated text. That means what was once seen as chaos limited to self-publishing platforms may gradually become a real test for traditional publishing too.

At its core, the dilemma is about trust. Commercial publishing has long been seen as the last fortress of human selection: the editor who reads, cuts, refines, and ensures the text has passed through an expert eye. But if the machine can now produce writing convincing enough to cross that gate, then the value of that gate itself comes into question.

Then there is the ethical dimension. For many writers, this is not just about a new tool, but a matter of principle. Some see the use of A.I. in fiction as a form of cheating if readers are not told. Others see it as an extension of an earlier theft, since many of these models were trained on protected works without clear permission.

What next?

The industry is moving toward a moment of reckoning it does not yet seem ready for. What is needed now is no longer broad language about originality, but precise rules defining what is allowed, what is banned, what must be disclosed, and when a text stops being fully human. Without those boundaries, publishing will keep reacting case by case instead of operating under a clear policy.

In the meantime, the hardest question may not be purely legal. When a reader opens a new novel and feels pulled in by its language and rhythm, will they still be able to trust that what they are reading came from human experience, and not from a machine that has simply learned to imitate it well? At that point, the issue becomes cultural at its core.